Data Sonification

«It is the transformation of relationships between data into perceivable relationships in the acoustic signal, in order to facilitate communication or interpretation.»

International Community for Auditory Display (ICAD)
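To make the definition concrete, here is a minimal sketch of one common approach, parameter-mapping sonification, in which each data value is mapped linearly onto a pitch range. The function name `sonify` and the 220 to 880 Hz range are illustrative assumptions, not a description of our own system.

```python
def sonify(values, f_min=220.0, f_max=880.0):
    """Map each data value linearly onto a frequency in [f_min, f_max] Hz.

    Hypothetical helper for illustration only: larger values become
    higher pitches, so relationships in the data become audible.
    """
    lo, hi = min(values), max(values)
    if hi == lo:
        # All values are equal: return the middle of the range for each.
        mid = (f_min + f_max) / 2
        return [mid for _ in values]
    span = hi - lo
    return [f_min + (v - lo) / span * (f_max - f_min) for v in values]


if __name__ == "__main__":
    print(sonify([0, 5, 10]))
```

The resulting frequencies could then be passed to any synthesizer or audio library; that step is left out to keep the sketch self-contained.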

music from yourself

Relaxing Music Digital Platform

Turning Cognitive Unconsciousness to Instant music

Thanks to the instant AI evaluation of the user's emotional state, which in neuroscience is called the “Cognitive Unconscious”, the user can immerse themselves in a sound experience of “Healing Music”: deep sounds drawn from their own emotional patterns. Emotional states are translated into “correspondence” values that determine dynamic soundscapes, which in turn respond to our emotional states within an estimated time. Everything is processed by AI algorithms.

We strongly believe that this is the key to increasing overall perceived wellness and supporting a more mindful daily state. Indeed, despite the huge effort spent on understanding emotions, we are often too distracted to truly understand what we are feeling in a given moment. This disconnection between our inner ego and ourselves is increasingly raising stress levels, with all the consequences that follow.

We believe that the first step towards reducing stress is understanding the feelings we are experiencing in a given moment.

We designed an affective computing system that analyzes a subject's emotional state and translates it into an emotional experience that allows people to feel their feelings through their senses.

No two listening sessions are the same. Each session is different for every user and aims to:

calm arousal levels to enhance focus

increase the level of attention

determine a state of relaxation and mental well-being

enhance ongoing concentration

counteract cognitive impairment

«Engagement with music affects the inter-subject correlation of brain responses during listening»

Madsen, Margulis, Rhimmon Simchy-Gross, C. Parr.

Nature

Emotional Dataset

Through the instantaneous detection of galvanic skin data (electrodermal activity, EDA), sound patterns are activated, guided by an emotional dataset that interacts with the Cognitive Unconscious. The result is a personalized soundtrack: musical landscapes for mindfulness.
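As a rough illustration of how an EDA reading could drive the choice of a sound pattern, the sketch below compares a short window of samples with a resting baseline and picks a pattern by arousal level. Everything in it is an assumption made for the example: the thresholds, the pattern names, and the functions `arousal_level` and `select_pattern` are hypothetical, and the actual system relies on a trained emotional dataset rather than fixed rules.

```python
from statistics import mean

# Hypothetical pattern names; the real sound patterns are not listed here.
PATTERNS = {
    "low": "ambient_drone",
    "medium": "slow_pulse",
    "high": "calming_descent",
}


def arousal_level(window, baseline):
    """Classify a window of EDA samples (microsiemens) against a baseline.

    The 1.1 and 1.5 ratio thresholds are illustrative assumptions.
    """
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    ratio = mean(window) / baseline
    if ratio < 1.1:
        return "low"
    if ratio < 1.5:
        return "medium"
    return "high"


def select_pattern(window, baseline):
    """Return the (hypothetical) sound pattern for the current arousal level."""
    return PATTERNS[arousal_level(window, baseline)]


if __name__ == "__main__":
    print(select_pattern([2.6, 2.6], baseline=2.0))
```

A rule-based threshold is used here only because it is easy to read; a learned model would replace `arousal_level` without changing how the pattern is selected.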

Music Design

The core of our system is an Artificial Intelligence module designed and trained to cope with the high variability of human biometric values, making it possible to obtain a subject’s emotional shades from them in real time.

As the music is generated from feelings, each experience is new and different, allowing people to reconnect with their inner ego.

Thanks to the instantaneous evaluation of emotional states, an aesthetic experience of listening to human biometric signals is born (432 Hz).
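For readers curious about what 432 Hz means in musical terms, the sketch below assumes it is used as the reference pitch for the note A4 (instead of the more common 440 Hz) and derives other notes with the standard equal-temperament formula. This reading of 432 Hz, and the helper `note_frequency`, are assumptions for illustration.

```python
def note_frequency(midi_note, a4_hz=432.0):
    """Equal-temperament frequency of a MIDI note number.

    MIDI note 69 is A4; each semitone multiplies the frequency by 2**(1/12).
    The default reference of 432 Hz is an assumption about the tuning.
    """
    return a4_hz * 2 ** ((midi_note - 69) / 12)


if __name__ == "__main__":
    for name, midi in [("A3", 57), ("C4", 60), ("A4", 69), ("A5", 81)]:
        print(f"{name}: {note_frequency(midi):.2f} Hz")
```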

The music is designed around your inner state.

Deepsound (me) is also a music creation tool: composers are invited to generate musical solutions based on emotional patterns drawn from an AI dataset. The dataset is built from anonymous data of human origin, that is, emotional parameters recorded in different fields of application (art experience, yoga session, creative work, etc.).

Artificial Intelligence

Input HD

AI

Music Experience

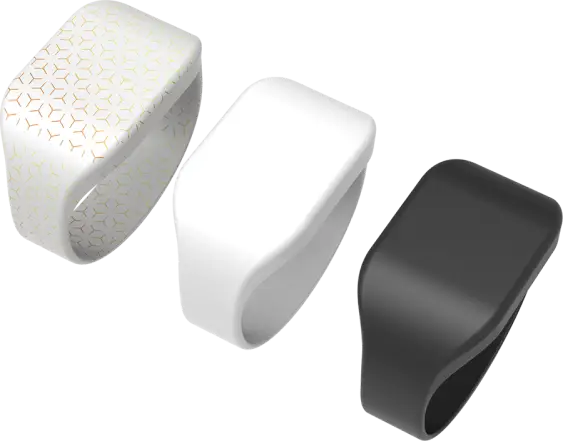

Original artificial intelligence software processes biometric data from smart rings or smartwatches. The instantaneous music produced from the emotional patterns acts on vigilance and visual attention.

Blockchain

Human data are transformed into original soundtracks and fixed in customized NFTs.

From the human to Web3, the path to mental well-being represents, in our philosophy of innovation, a new approach to reading the inner life.

It is a creative process that aims primarily at enhancing human potential through AI technology, involving the subject and his or her instantaneous biometric parameters at the moment he or she experiences a work of art. This ‘hybrid model’ proposes new paradigms of collaboration between human beings and artificial intelligence, focusing on the human factor as the origin of the experience itself and giving back a representation of the activity of our Cognitive Unconscious.

The contemplation of a work of art is an area of great interest for neuroscience. In the space between individual and image, an enormous amount of data is generated in a few tenths of a second. In this space, between the art object and the observer, our level of attention (emotional arousal) prepares for the onset of emotions. The experience of art thus becomes the locus of our emotional awareness.

Smartwatch / Rings

Art experience

While we observe art, our brain produces biodata so rich in information that scientific disciplines such as neuroscience often investigate this area to access the secrets of human consciousness.

The instant music helps the observer engage visually with the work and creates unique moments of true symbiosis with the artistic message.

A new era for the museum experience has just begun.

It is a new audio guide paradigm for museums, based on artificial intelligence and content personalisation.

More than 33 thousand sound combinations personalize the art lover’s experience, based on instant physiological data.

Healing Music at 432 Hz promotes attention and immersion in the viewing of art.

For each journey, narrating voices and soundtracks create a unique and unrepeatable experience.

Team

Martino Cortese

Angel Investor

© 2023 Innereo srl PIVA 09772491214

Made with ✨ by Nicole Curioni web.design